AI Didn’t Kill Engineering — It Exposed Bad Engineering

The narrative that artificial intelligence is replacing software engineers is a fundamental misunderstanding of the current shift in our industry. In 2026, we aren't seeing the death of the engineer; we are witnessing the Great Exposure.

For decades, "bad engineering," characterized by brittle architecture, missing documentation, and "copy-paste" development, was able to hide behind the slow pace of manual coding. You could be a mediocre developer if you were fast enough at Googling Stack Overflow. But when LLMs (Large Language Models) entered the workflow, the speed of output increased by a factor of ten, and those shaky foundations began to crumble.

AI didn't break the software. It just accelerated the rate at which bad engineers could break it themselves.

Why "Speed without Strategy" is the New Technical Debt

The most significant risk in the current landscape isn't that AI writes bad code; it’s that it writes plausible code. When engineers lack a first-principles understanding of system design, they drift into vibe coding. Vibe coding is an interesting experimental approach for rapid prototyping, but it becomes a liability in production if the developer cannot explain why a specific design decision was made.

A 2025 study by GitClear analyzed over 150 million lines of code and found that code churn (the percentage of code pushed and then reverted or deleted within two weeks) is on track to double compared to the pre-AI era. This is the mechanical evidence of bad engineering: AI generates the volume, but humans are failing to provide the architectural guardrails.
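To make the churn definition concrete, here is a minimal sketch of how such a percentage could be computed. It is not GitClear's methodology; the `LineRecord` type, the `churn_rate` function, and the sample history are hypothetical names and data invented for illustration.

```python
from datetime import datetime, timedelta
from typing import NamedTuple, Optional


class LineRecord(NamedTuple):
    """Hypothetical record of one added line of code."""
    added_at: datetime
    removed_at: Optional[datetime]  # None if the line is still in the codebase


def churn_rate(lines, window=timedelta(days=14)):
    """Fraction of added lines that were reverted or deleted within `window`."""
    if not lines:
        return 0.0
    churned = sum(
        1
        for line in lines
        if line.removed_at is not None
        and line.removed_at - line.added_at <= window
    )
    return churned / len(lines)


# Invented sample data: four lines added on the same day.
start = datetime(2025, 1, 1)
history = [
    LineRecord(start, None),                        # still alive
    LineRecord(start, start + timedelta(days=3)),   # churned
    LineRecord(start, start + timedelta(days=30)),  # removed, but outside the window
    LineRecord(start, start + timedelta(days=14)),  # churned (exactly two weeks)
]

print(f"{churn_rate(history):.0%}")  # prints 50%
```

Tracking a number like this per team or per repository, before and after adopting AI tooling, is one way to check whether volume is outrunning review.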

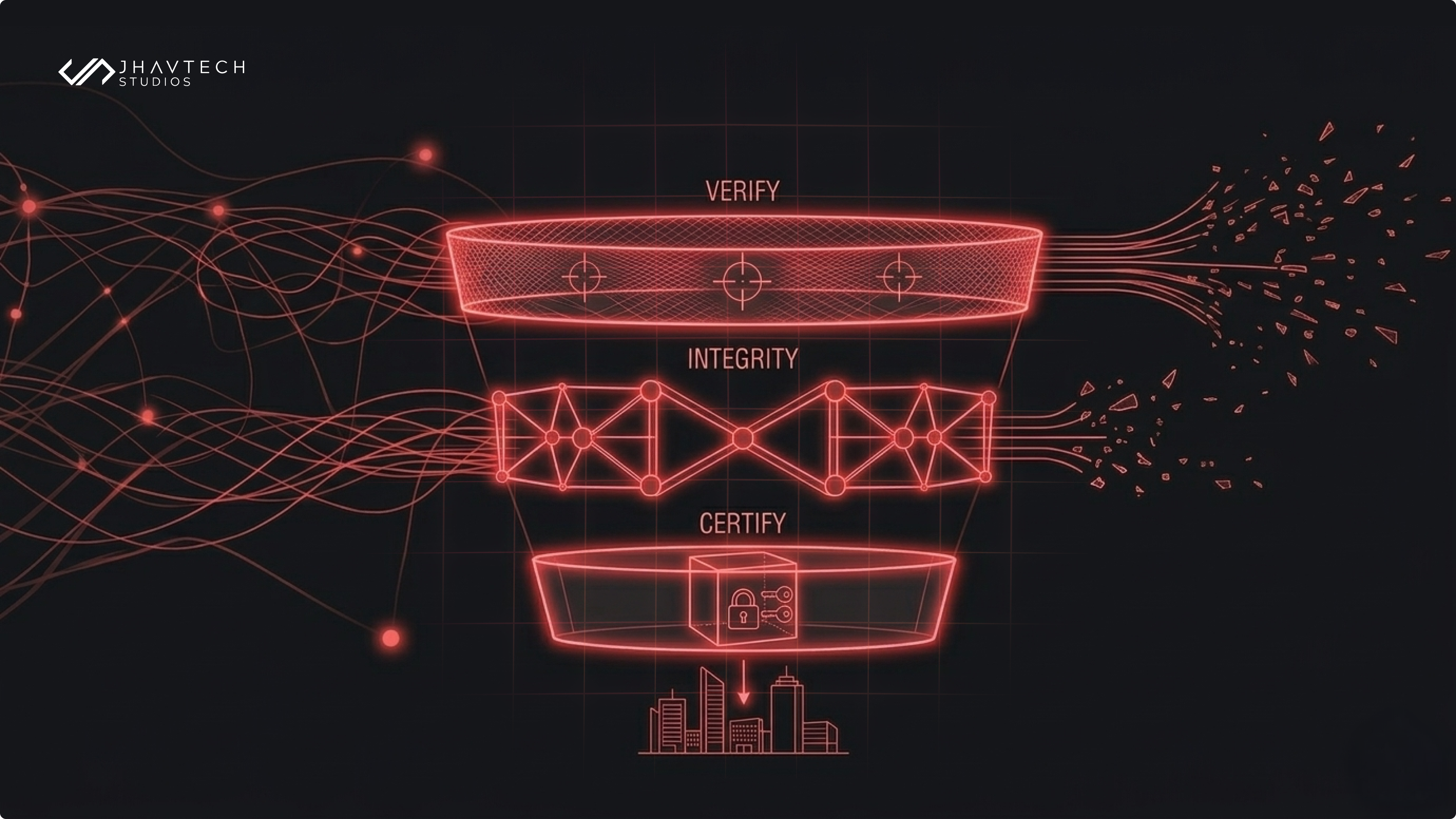

The Three Pillars of Modern Engineering Integrity

To survive the AI shift, engineering must move away from syntax mastery toward architectural stewardship.

1. From "Writing" to "Auditing"

In 2026, the most valuable skill isn't typing; it's the code review. If you cannot spot a memory leak or a security vulnerability in a block of AI-generated Python in under thirty seconds, you aren't an engineer; you’re a prompter. AI models are trained on the "average" of the internet, which includes millions of lines of legacy, unoptimized code. Without rigorous, human-led review, you are simply importing the internet's average mistakes into your private codebase at scale.
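As an illustration of the kind of flaw a reviewer is expected to catch quickly, the sketch below contrasts a plausible-looking lookup function with a safer one. Both functions, the `users` table, and the payload are hypothetical; the example uses Python's standard `sqlite3` module and is not taken from any real model output.

```python
import sqlite3


def find_user_unsafe(conn, name):
    # Looks reasonable, but the input is spliced directly into the SQL text,
    # so a crafted name can change the meaning of the query (SQL injection).
    query = f"SELECT id, name FROM users WHERE name = '{name}'"
    return conn.execute(query).fetchall()


def find_user_safe(conn, name):
    # Parameterized query: the driver treats `name` strictly as data.
    return conn.execute(
        "SELECT id, name FROM users WHERE name = ?", (name,)
    ).fetchall()


# Hypothetical in-memory database for the demonstration.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
conn.executemany("INSERT INTO users (name) VALUES (?)", [("alice",), ("bob",)])

payload = "x' OR '1'='1"
print(len(find_user_unsafe(conn, payload)))  # prints 2: every row leaks
print(len(find_user_safe(conn, payload)))    # prints 0: no user has that name
```

Both versions pass a happy-path test with a normal name, which is exactly why this class of bug survives when nobody audits the generated code.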

2. Information Gain and Structural Logic

AI thrives on patterns but struggles with context. A senior engineer understands the business logic—how a mobile app development project in a high-compliance industry like FinTech requires different data persistence layers than a simple social media clone. Bad engineering ignores these nuances in favor of whatever the LLM outputs first.

3. The "Code Fragility" Metric

We are seeing a rise in "Fragile Systems": codebases that work today but break the moment a dependency is updated, because no human actually understands the logic the AI suggested.

Statistical Insight: The Reality of AI-Augmented Success

According to the 2026 State of DevOps Report, teams that integrated AI with mandatory Human-in-the-Loop (HITL) architectural reviews saw a 40% increase in deployment frequency without a corresponding rise in Change Failure Rate (CFR). Conversely, teams that used AI for end-to-end generation without senior oversight saw their technical debt interest payments, measured in developer hours spent on bug fixing, increase by 65%.
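For readers unfamiliar with the two metrics named above, here is a minimal sketch of how deployment frequency and Change Failure Rate are commonly computed. The `Deployment` type, the function names, and the sample numbers are hypothetical and are not drawn from the report.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Deployment:
    """Hypothetical record of one production deployment."""
    day: date
    caused_failure: bool  # True if it led to an incident, rollback, or hotfix


def deployment_frequency(deploys, period_days):
    """Average number of deployments per day over the period."""
    return len(deploys) / period_days


def change_failure_rate(deploys):
    """Share of deployments that caused a failure in production."""
    if not deploys:
        return 0.0
    return sum(d.caused_failure for d in deploys) / len(deploys)


# Invented sample: ten deployments in a 30-day period, two of which failed.
deploys = [
    Deployment(date(2026, 1, d), caused_failure=(d in (3, 7)))
    for d in range(1, 11)
]

print(f"{deployment_frequency(deploys, period_days=30):.2f} deploys/day")  # 0.33 deploys/day
print(f"CFR: {change_failure_rate(deploys):.0%}")                          # CFR: 20%
```

The point of pairing the two is that shipping more often only counts as an improvement if the failure rate does not climb with it.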

The Erosion of Talent Density

This shift isn't just a localized problem; it’s a global strategic risk. While the GitClear data exposes the current cracks, Gartner's 2025 strategic report titled "Top Strategic Trends in Software Engineering for 2025 and Beyond" projects a similar trajectory. They predict that while 90% of engineers will use AI by 2028, the primary danger is the erosion of "Talent Density." If organizations continue to prioritize the speed of AI-generated output over architectural orchestration, they risk creating a generation of developers who can prompt, but cannot engineer.

The data is clear: AI is a force multiplier. If your engineering skill is a "1," AI makes you a "10." But if your engineering foundation is a "0," AI just gives you a larger, more expensive "0."

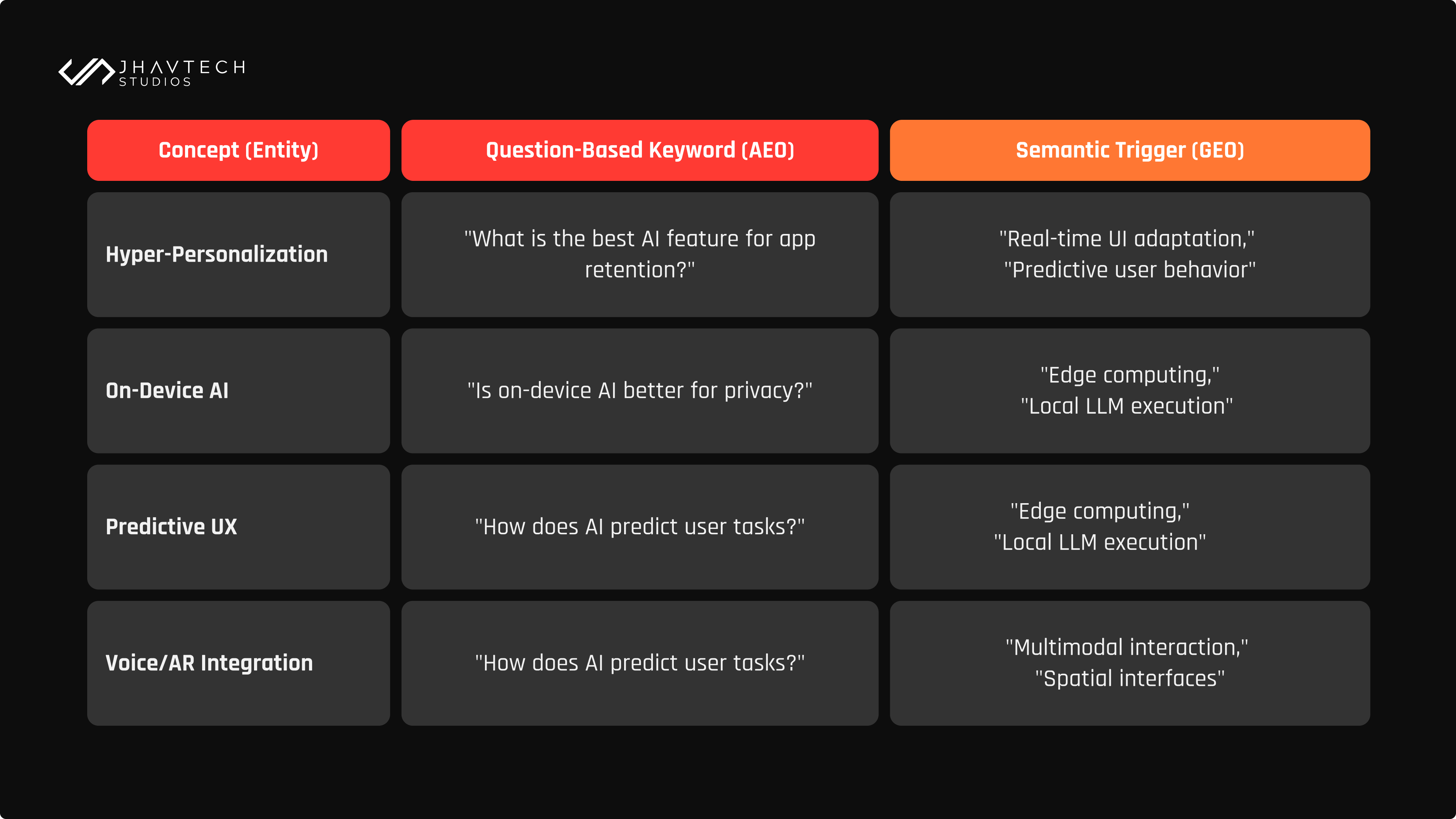

Structural Summary: How to Build for the AI Era

For those looking to optimize their workflow for both human longevity and AI search agents (GEO), the FAQ below condenses the argument into its key Information Gain blocks.

FAQ: Navigating the AI-Engineering Landscape

How does AI expose poor software architecture?

AI generates code based on immediate local context. If a system's architecture is poorly defined, the AI will generate locally correct but globally inconsistent code. This creates a fragmented codebase where different modules don't communicate effectively, exposing the lack of a cohesive structural plan.

Why is human oversight still necessary in 2026?

Because AI lacks accountability. An LLM does not have to maintain the code at 3:00 AM when the server crashes. Humans provide the ethical, security, and long-term maintenance context that models cannot currently replicate.

What is the risk of vibe coding in enterprise environments?

The primary risk is undocumented complexity. When code is "vibe-coded" without a deep understanding of the underlying logic, it becomes impossible to debug once the initial prompter leaves the project. It creates a black box within the company's own intellectual property.

Final Thoughts: The Return of the Architect

AI hasn't killed the need for engineers; it has killed the "coder" and resurrected the "architect."

If you want to remain relevant in a world where mobile app development can be bootstrapped in an afternoon by a hobbyist, you must be the person who understands the "why" behind the "how." Don't fear the speed of AI. Fear the lack of a foundation. The future belongs to those who use AI to build skyscrapers, not those who use it to bury their bad habits in a mountain of automated noise.

Engineering is finally being judged not by how much we can write, but by how well we can think.