HappyHorse 1.0

HappyHorse 1.0 vs Seedance 2.0: Deep Performance Benchmarks

HappyHorse 1.0 vs Seedance 2.0: Deep Performance Benchmarks and 2026 Industry Analysis

HappyHorse 1.0 vs Seedance 2.0: Deep Performance Benchmarks

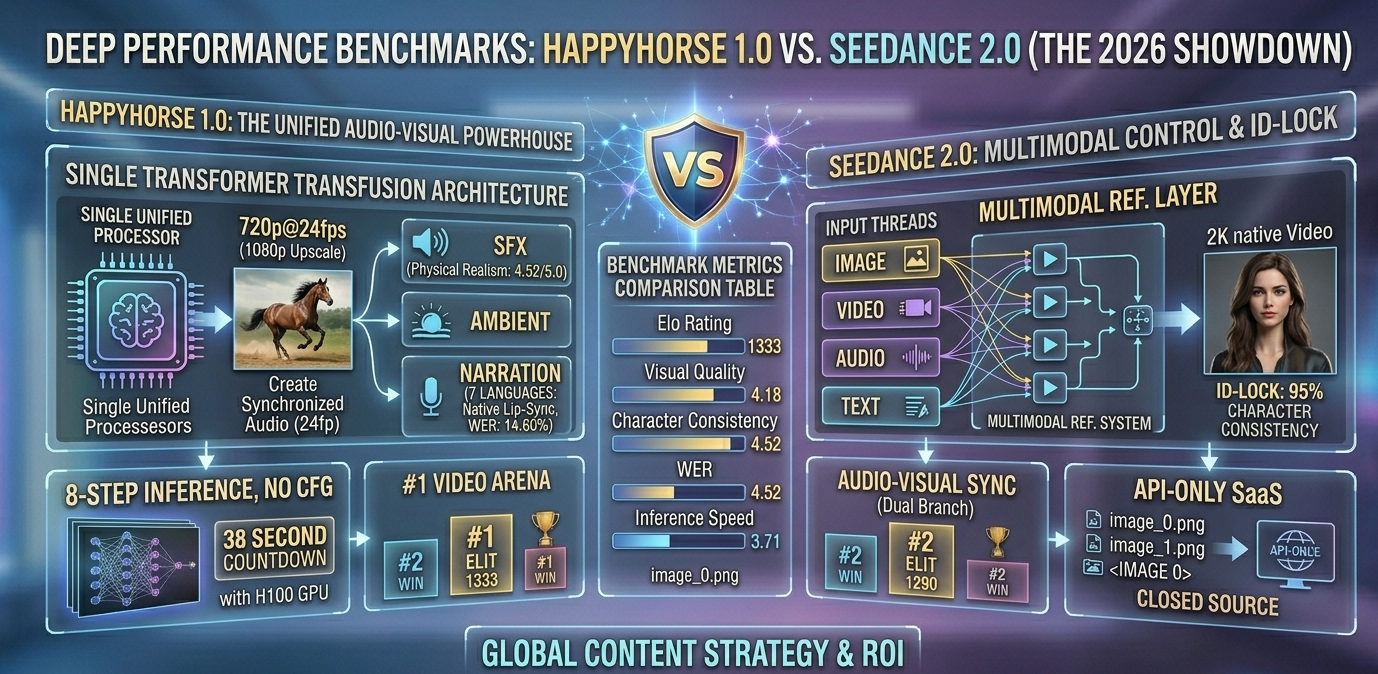

In the rapidly evolving landscape of 2026 AI video generation, the ultimate technical showdown is HappyHorse 1.0 vs Seedance 2.0. For developers, marketers, and e-commerce brands, understanding the deep performance benchmarks of these two models is critical for resource allocation. Based on the latest data from the Artificial Analysis Video Arena, HappyHorse 1.0 currently holds the #1 global ranking with an Elo score of 1333, utilizing a revolutionary 15-Billion parameter "Transfusion" architecture to natively synchronize video and audio. Conversely, ByteDance's Seedance 2.0 remains the industry standard for character consistency and highly controlled multimodal generation. This deep-dive analysis unpacks the benchmark data, architectural differences, and practical applications of both models to help you optimize your generative video strategy.

Artificial Analysis Arena: The 2026 Benchmark Data

To establish an objective comparison, we must look at standardized testing. The Artificial Analysis Video Arena utilizes blind A/B testing with over 2,000 human evaluators focusing on prompt adherence, visual fidelity, and physical realism.

When evaluating the HappyHorse 1.0 vs Seedance 2.0 deep performance benchmarks, the data reveals distinct areas of dominance for each model.

Benchmark Metric

HappyHorse 1.0 (15B)

Seedance 2.0

Competitive Advantage

Overall Elo Rating

1333 (#1)

1290 (#2)

HappyHorse (+43 Points)

Visual Quality (out of 5)

4.80

4.75

HappyHorse

Character Consistency

88%

95% (ID-Lock)

Seedance 2.0

Physics/Realism (out of 5)

4.52

4.61

Seedance 2.0

Word Error Rate (Audio)

14.60%

~18.50%

HappyHorse

Inference Time (1080p)

~38 Seconds

~90 Seconds

HappyHorse (2.3x Faster)

Key Takeaway: HappyHorse 1.0 excels in raw generation speed, audio synchronization, and overall visual quality, earning it the top spot. Seedance 2.0, however, maintains a tight grip on physical realism and character identity tracking.

Architectural Paradigms: Transfusion vs. Multimodal Latent Space

The performance disparities between these two models stem directly from their foundational architectures.

HappyHorse 1.0: The Single Transformer "Transfusion"

HappyHorse 1.0 deviates from traditional diffusion models by utilizing a unified Transfusion architecture.

How it works: Instead of generating visual latents and then relying on a secondary model to "guess" the audio, HappyHorse processes video frames and audio waveforms within the exact same Transformer attention mechanism.

The Benefit: If the prompt specifies "a glass shattering on a tile floor," the visual impact and the audio frequency peak are calculated simultaneously. This eliminates the micro-second delays that plague post-production AI dubbing.

Seedance 2.0: Multimodal Control and The ID-Lock

Seedance 2.0 operates on a highly refined, proprietary multimodal latent space, optimized specifically for short-form social media (like TikTok and Reels).

How it works: Seedance allows for deeply layered inputs (Image + Video + Text + Audio). Its standout feature is the Reference Layer (ID-Lock).

The Benefit: Users can upload three images of a human model or a product. The AI creates a persistent mathematical anchor for that identity, ensuring the face, clothing, or branding does not morph when the camera angle changes.

Native Audio Synchronization: The Conversion Multiplier

In 2026, silent AI videos or those with generic background music suffer from massive viewer drop-off. The audio capabilities are a major focal point of the HappyHorse 1.0 vs Seedance 2.0 deep performance benchmarks.

The HappyHorse Approach: It features true native generation for Sound Effects (SFX), Ambient audio, and Narration. Impressively, it supports native lip-syncing across seven languages (Mandarin, Cantonese, English, Japanese, Korean, German, and French). This makes it an unparalleled tool for global localization.

The Seedance Approach: Seedance utilizes a "Dual-Branch" audio sync. You upload a pre-recorded audio file, and the model mathematically maps the avatar's micro-expressions and lip movements to the sound waves. It is highly accurate but requires a two-step workflow.

Inference Speed and Compute Costs (ROI Analysis)

For SaaS platforms, API integrators, and e-commerce agencies, generation speed dictates profitability.

HappyHorse's 8-Step Advantage: Through aggressive model distillation, HappyHorse 1.0 has eliminated the need for Classifier-Free Guidance (CFG). It achieves high-fidelity 720p@24fps (upscalable to 1080p) in just 8 inference steps. On an NVIDIA H100 GPU, this takes approximately 38 seconds. Because it is completely open-source, agencies can deploy it locally, driving the marginal cost per video down to pennies.

Seedance's Compute Demands: As a closed-source SaaS model, Seedance requires API calls to ByteDance's servers. While it generates stunning native 2K resolution, the complex multimodal processing generally takes 90 to 120 seconds. Users must factor in subscription costs and API token pricing, which scales linearly with production volume.

Practical Application: Which Model Should You Use?

HappyHorse 1.0 vs Seedance 2.0: Deep Performance Benchmarks

Choosing between these two models depends entirely on your production goals.

Use HappyHorse 1.0 If:

You require mass localization: You need to launch a product in Europe, Asia, and the US simultaneously and need native-sounding narration in local languages.

Speed is your primary metric: You are A/B testing hundreds of creative hooks daily for TikTok Shop and need instant generation without API bottlenecks.

You prioritize sensory immersion: Your ad relies heavily on ASMR elements (sizzling food, mechanical clicks) that require perfect physical sync.

Use Seedance 2.0 If:

Brand consistency is mandatory: You are running a series of ads featuring a specific "AI Influencer" or a highly detailed branded product, where zero visual hallucination is acceptable.

You are working from complex video references: You want to extract the exact motion path and pacing of a viral video and apply it to a new character (Viral Cloning).

Leveraging These Models via Aggregator Platforms

For many TikTok sellers and marketers, deploying open-source models locally or managing multiple API subscriptions is technically prohibitive. This is where platforms like CreatOK.ai bridge the gap. By acting as a multimodal smart selector, these tools allow users to input a prompt, and the system automatically routes it to HappyHorse for an audio-heavy ad, or Seedance 2.0 for a character-driven narrative. Utilizing a unified dashboard ensures creators get the best of both benchmark leaders without the technical overhead.

H2: FAQ Section

Q1: What are the deep performance benchmarks measuring?

A: Benchmarks like the Artificial Analysis Arena measure AI video models across several criteria, including visual fidelity, text-to-video alignment, physics realism, audio synchronization (Word Error Rate), and generation speed.

Q2: Why did HappyHorse 1.0 score higher than Seedance 2.0 in the Elo ratings?

A: HappyHorse achieved an Elo of 1333 primarily due to its breakthrough "Transfusion" architecture, which allows for perfect native audio-visual sync and incredibly fast 8-step inference, leading to higher human evaluator preference.

Q3: Is HappyHorse 1.0 an open-source model?

A: Yes, HappyHorse 1.0 is completely open-source. This includes the 15B base model, the distilled model for faster inference, the super-resolution module, and the complete inference code.

Q4: Does Seedance 2.0 have native audio?

A: Seedance uses a dual-branch system. While it is excellent at lip-syncing to uploaded audio tracks, it does not natively generate the audio track simultaneously from a text prompt in the same way HappyHorse does.

Q5: Which model is better for TikTok e-commerce ads?

A: If you need to rapidly test multiple variations and localized languages at a low cost, HappyHorse is superior. If your ad centers around a specific, recognizable human spokesperson that must remain consistent, Seedance 2.0 is the better choice.

Q6: What is the inference speed of HappyHorse 1.0?

A: Using its distilled 8-step inference mode without CFG, HappyHorse can generate a 5-second video at 1080p resolution in approximately 38 seconds on H100 hardware.